Some time ago I claimed that the JPA 2.0 metamodel API has the potential to revolutionize Java development.

I still think the concept is very interesting, since it shows an approach to strongly typed metaprogramming in Java. However, I do not think it has any relevance in real-world projects. One reason is that strongly typed JPA criteria queries are very verbose and bring their own accidental complexity compared with JPQL. The other reason is that actually using an annotation processor in any build environment is still too complicated.

In the following I show how to configure the JPA metamodel annotation processor of EclipseLink for different environments. A working example for this is exercise 6.3 in our jpaworkshop.

Maven

EclipseLink documentation is (once again) lacking, ignoring the reality that Maven is currently the most prominent build environment in the enterprise.

Fortunately this is well documented here and here.

You need to configure a maven processor plugin in the pom that triggers the annotation processor in the generate-sources phase:

There are different Maven processor plugins. I am using maven-annotation-plugin; an alternative is the Apt Maven Plugin.
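A minimal sketch of what such a plugin configuration could look like, assuming the maven-processor-plugin (the plugin published by the maven-annotation-plugin project) and the default Maven directory layout. The two version properties and the execution id are placeholders I made up for illustration. Passing the persistence.xml location explicitly may not be necessary in every setup, and the processor jar could alternatively be declared as a regular project dependency:

```xml
<!-- Sketch only: goes into <build><plugins> of the pom.xml. -->
<plugin>
  <groupId>org.bsc.maven</groupId>
  <artifactId>maven-processor-plugin</artifactId>
  <!-- placeholder property, define it with the release you actually use -->
  <version>${processor.plugin.version}</version>
  <executions>
    <execution>
      <!-- hypothetical id, any unique name works -->
      <id>eclipselink-jpa-metamodel</id>
      <goals>
        <goal>process</goal>
      </goals>
      <phase>generate-sources</phase>
      <configuration>
        <processors>
          <!-- fully qualified class name of the EclipseLink annotation processor -->
          <processor>org.eclipse.persistence.internal.jpa.modelgen.CanonicalModelProcessor</processor>
        </processors>
        <!-- assumes persistence.xml lives in the default resources folder -->
        <compilerArguments>-Aeclipselink.persistencexml=src/main/resources/META-INF/persistence.xml</compilerArguments>
      </configuration>
    </execution>
  </executions>
  <dependencies>
    <dependency>
      <groupId>org.eclipse.persistence</groupId>
      <artifactId>org.eclipse.persistence.jpa.modelgen.processor</artifactId>
      <!-- placeholder property, should match your EclipseLink version -->
      <version>${eclipselink.version}</version>
    </dependency>
  </dependencies>
</plugin>
```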

Now Maven was easy, let's tackle the IDEs...

NetBeans

NetBeans excels in this task. When opening the Maven pom, it automatically recognizes the above configuration with the maven-processor-plugin and configures itself to use the EclipseLink annotation processor.

No additional configuration whatsoever is needed! Metamodel classes get generated on the fly with each compilation and even with background compilation ... I wish NetBeans was my favorite IDE :-)

IntelliJ IDEA

Configuration of an annotation processor is nicely documented in this post by JetBrains.

The good things:

- Annotation processors get picked up from the classpath, so you don't have to specify the jar (which is a good thing, since the jar name might change when updating the version).

- In combination with IDEA the EclipseLink annotation processor detects the default META-INF/persistence.xml automatically without explicit configuration.

The bad things:

- You need to know the exact fully qualified name of the annotation processor class.

- Generation of the JPA metamodel classes works only on compilation (or on explicitly triggering annotation processing). It does not work on the fly when editing files, since IDEA does not have real background compilation.

Eclipse IDE

One might be tempted to think that configuration should be especially easy since Eclipse IDE and EclipseLink imply some kind of close relation...

The EclipseLink documentation explains how to configure the annotation processor ... except it does not work:

In combination with Eclipse, the EclipseLink annotation processor does not detect the default META-INF/persistence.xml. You have to configure it manually. This is neither documented nor trivial. The problem was reported as a bug, but the bug was closed without fixing the problem! I wonder how many people gave up on using the metamodel API just because of that shortcoming...

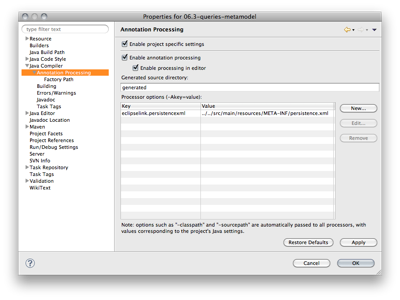

Here is how to configure the EclipseLink annotation processor in Eclipse:

As described in the documentation you have to include three jars on the factory path of the annotation processing configuration:

This approach is bad from the beginning, since those jars might change their names when you update them, and they might not be versioned together with your sources (this is the case when using Maven). IDEA's approach of locating annotation processors on the classpath by their class name is much better in this regard.

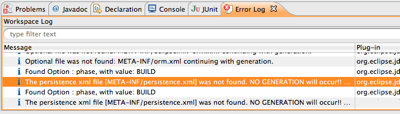

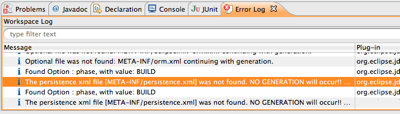

But as mentioned above, this does not work yet. You get the following error in the Eclipse error log:

The persistence xml file [META-INF/persistence.xml] was not found. NO GENERATION will occur!! Please ensure a persistence xml file is available either from the CLASS_OUTPUT directory [META-INF/persistence.xml]

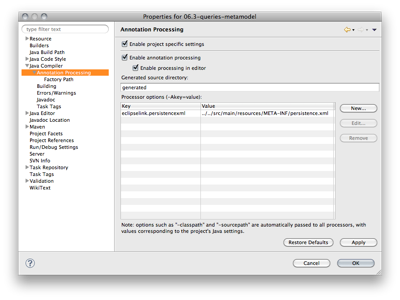

The solution is to pass the persistence.xml explicitly to the annotation processor by configuring an annotation processor option. The key is eclipselink.persistencexml. The value is the path to your persistence.xml relative to your CLASS_OUTPUT directory. When using Maven, your CLASS_OUTPUT directory is target/classes, so you have to prepend ../../ to the path to get back to the project root directory ... not trivial indeed ... (note that the path separator might differ on Windows)
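To make the relative path less of a guessing game, here is a small sketch that derives the option value with java.nio. The class and method names are made up for illustration, and the location src/main/resources/META-INF/persistence.xml is an assumption based on the default Maven layout, so adjust both paths to your project:

```java
import java.nio.file.Path;
import java.nio.file.Paths;

public class PersistenceXmlOption {

    // Returns the location of persistence.xml relative to the CLASS_OUTPUT
    // directory, i.e. the value for the eclipselink.persistencexml option.
    // Both arguments are expected to be relative to the project root.
    public static String relativeToClassOutput(String classOutputDir, String persistenceXml) {
        Path output = Paths.get(classOutputDir).normalize();
        Path xml = Paths.get(persistenceXml).normalize();
        // relativize() inserts one ".." per directory level of the output path
        return output.relativize(xml).toString();
    }

    public static void main(String[] args) {
        // Assumed default Maven layout, not taken from the original setup
        String value = relativeToClassOutput(
                "target/classes",
                "src/main/resources/META-INF/persistence.xml");
        // On Unix-like systems this prints:
        // eclipselink.persistencexml=../../src/main/resources/META-INF/persistence.xml
        System.out.println("eclipselink.persistencexml=" + value);
    }
}
```

On Windows, toString() yields backslashes as separators, which is the variation mentioned above.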

Finally, generation of the JPA metamodel classes also works in Eclipse. Thanks to Eclipse's great background compilation, it works nicely on the fly when editing files.
